For decades, we struggled with what AI researchers call the representation problem.

Human concepts are so intuitive to us that we forget how strange they are. Music, faces, language, emotion: how do you explain to a machine what makes something “music”? You can’t just list rules. Music breaks its own rules constantly. The concept lives in some subliminal space that humans navigate effortlessly but couldn’t articulate if you held a gun to their heads. That space is imprecise and often non-conforming, and it is the abstract space in which human knowledge exists and develops.

How do you represent human knowledge to machines? More importantly, how do you make them understand it?

The challenge initially wasn’t teaching machines to think. It was teaching them to recognize patterns at all. To distinguish signal from noise. To notice that this pattern of pixels appears when humans say “cat” and that pattern when they say “dog.” This arrangement of words is spam while that one isn’t. This medical scan shows a tumor. This sequence of sounds is the word “hello.” This pattern of financial transactions signals fraud. This combination of user behaviors predicts they’ll click. This weather pattern suggests rain tomorrow.

Pattern recognition. Pattern classification. Teaching machines what our concepts even are. Not in any deep sense, but functionally. Teaching them to recognize and recreate the patterns that define our world.

This was harder than anyone expected. It was just the beginning.

The Lines

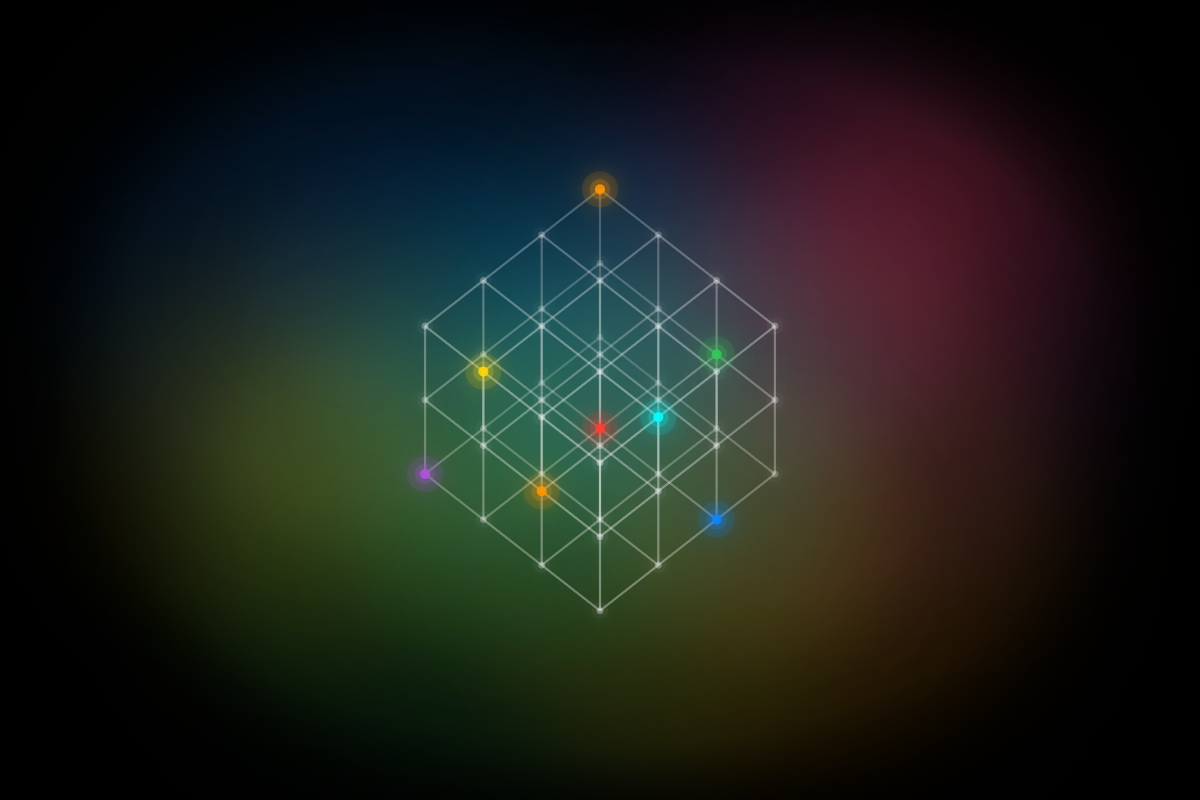

I want you to imagine a visual device for thinking about human knowledge: a grid of lines.

Each line represents a conceptual dimension. A domain of human understanding. Mathematics is a line. So is music. Biology. Ethics. Cooking. Quantum mechanics. The history of trade routes. Philosophy. Economics. Linguistics.

These aren’t arbitrary categories. Each line represents a distinct way of thinking about the world. A framework, a set of methods, a vocabulary, a tradition of inquiry. Humans have spent millennia drawing these lines, refining them, teaching them to new generations. These lines are not defined exactly. They vary by culture, geography, language, age, and generation, and even between two people who share all of those traits.

I’ll use these lines as a visual tool to analyze some aspects of human concepts. It’s not meant to be a model that represents human concepts fully.

Now look at where the lines intersect.

Start simple: where mathematics meets music, you get acoustics and rhythm theory. But now add more lines to that same point. Bring in electronics and you get synthesizers and digital signal processing. Add physics and you get the science of instrument construction. Add psychology and you get the study of how rhythm affects cognition and emotion. Add computer science and you get algorithmic composition and generative music systems. This single node, where mathematics, music, electronics, physics, psychology, and computer science converge, is where today’s speakers and sound systems exist. Entire fields of study and innovation compressed into the devices we use every day.

Look at another node: where biology meets medicine. Add chemistry and you get pharmacology. Add ethics and you get bioethics and medical ethics. Add engineering and you get biomedical devices and prosthetics. Add data science and you get precision medicine and genomics. Add physics and you get medical imaging technologies. This intersection of biology, medicine, chemistry, ethics, engineering, data science, and physics isn’t just a theoretical cluster. It’s the reality of modern healthcare. Every diagnosis, every treatment, every hospital visit exists at this convergence point. The MRI scan, the prescribed medication, the pacemaker, the genetic test. All products of this massive multidimensional node we encounter whenever we walk into a doctor’s office.

Consider where chemistry meets cooking. Add physics and you get the thermodynamics of cooking. Add biology and you get nutrition science and fermentation. Add industrial design and you get kitchen equipment engineering. Add materials science and you get novel cookware and food packaging. Add anthropology and you get the cultural evolution of cuisine. Add economics and you get agricultural supply chains and the food industry. The field we call “molecular gastronomy” sits at this rich intersection where chemistry, cooking, physics, biology, industrial design, materials science, anthropology, and economics all meet.

Or where psychology meets economics, the home of behavioral economics: add sociology and you get market psychology. Add neuroscience and you get neuroeconomics. Add anthropology and you get economic anthropology and the study of how different cultures approach value and exchange. Add mathematics and you get game theory and decision theory. Add technology and you get the attention economy and digital behavior design. Add philosophy and you get questions of utility, happiness, and what it means to make rational choices.

The grid isn’t just two-dimensional. It’s multidimensional. A lattice where hundreds of conceptual lines converge in countless ways. And the most interesting innovations don’t happen where two lines cross. They happen where five, or seven, or ten lines converge simultaneously, creating nodes of astonishing complexity and possibility.

Every great innovation is, in some sense, a node on this lattice. Every patent, every breakthrough paper, every invention that changed how we live. A point where existing lines of thought crossed and sparked something new.

The history of human progress is the history of drawing new lines and discovering what happens when they intersect.

What Machine Learning Gave Us

The breakthrough of machine learning, before the current AI explosion, before ChatGPT and its cousins, was teaching machines to work within each of these lines.

Pattern recognition. Pattern recreation. Given enough examples of music, a model could recognize music. Given enough examples of faces, it could recognize faces. Given enough examples of spam, it could recognize spam.
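To make “given enough examples” concrete, here is a minimal sketch of a word-count spam classifier. It is a toy naive Bayes model chosen for illustration, not a description of any particular production system. The four training messages and the names `TRAIN`, `train`, and `classify` are invented for this example.

```python
import math
from collections import Counter

# Toy, made-up training messages (hypothetical; not real data).
TRAIN = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("lunch meeting moved to noon", "ham"),
    ("see you at the meeting tomorrow", "ham"),
]

def train(examples):
    """Count how often each word appears under each label."""
    counts = {"spam": Counter(), "ham": Counter()}
    docs = Counter()
    for text, label in examples:
        counts[label].update(text.split())
        docs[label] += 1
    return counts, docs

def classify(text, counts, docs):
    """Return the label whose word pattern best matches the text."""
    vocab = set(counts["spam"]) | set(counts["ham"])
    best_label, best_score = None, -math.inf
    for label in counts:
        total = sum(counts[label].values())
        # Log prior: how common this label was in the examples.
        score = math.log(docs[label] / sum(docs.values()))
        for word in text.split():
            # Add-one smoothing so unseen words do not zero out the score.
            score += math.log((counts[label][word] + 1) / (total + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

counts, docs = train(TRAIN)
print(classify("free prize now", counts, docs))
print(classify("meeting at noon", counts, docs))
```

The model never learns what spam is. It only learns which word patterns tend to appear when humans say “spam,” which is the functional kind of recognition described here.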

This was genuinely remarkable. We’d finally cracked the representation problem, at least for very narrow and specific domains. Machines could understand individual lines. Not in the way humans understand them, but functionally. They could operate within a conceptual dimension.

Speech recognition. Image classification. Recommendation systems. Fraud detection. These aren’t trivial achievements. They represent decades of work on teaching machines to navigate the lines humans had drawn.

But these systems were specialists. A model trained on music knew music. A model trained on images knew images. With few exceptions, they lived on their respective lines, unable to cross from one to another.

The Generative Leap

Then something changed.

Large language models and generative AI, the technology behind today’s AI assistants, didn’t just learn to recognize patterns within individual lines. They learned to recognize patterns across them, mainly because the wealth of recorded human knowledge is interdisciplinary and cross-references fields and concepts.

An important acknowledgment: everything AI does, it does by learning from the accumulated work of human civilization. It’s entirely derivative in that sense. Built on patterns extracted from what humans have created and what the natural world exhibits. But this is also how human knowledge works. We don’t create in a vacuum. Newton famously wrote of “standing on the shoulders of giants.” Every human breakthrough builds on what came before, absorbing and recombining the insights of previous generations. The difference with AI isn’t that it learns from existing knowledge. We all do that. The difference is the scale and speed at which it can traverse that knowledge space.

This is the leap that makes current AI feel different. It’s not just recognizing music or recognizing text or recognizing code. It’s recognizing the relationships between music and text and code and everything else in its training data.

The result: these systems can work at intersections far more effectively than pure ML models could.

Want to know what concepts from fluid dynamics might apply to urban traffic flow? AI can make that connection. Curious whether there are unexplored parallels between evolutionary biology and market economics? It’s already able to make those connections. Looking for that obscure technique from textile manufacturing that might solve your materials science problem? It can surface that intersection too.

This is why current AI surprises us. It’s not doing something completely alien. It’s doing something humans do. Finding connections between different ways of thinking. But it’s doing it at a scale and speed that sometimes reveals connections we never noticed.

Exploring the Space

Back to our visual device.

Imagine a simple grid: three lines running one direction, three perpendicular. That gives you nine intersection points. Nine nodes where different ways of thinking might combine.

Now ask: how many of those nodes can a single human explore?

Not many. Humans face constraints. Time. Energy. Training. Specialization. Interest. You might spend your career at the intersection of music and mathematics. Someone else explores biology and ethics. Another person works where cooking meets chemistry.

Realistically, across all the constraints of a human life, you might deeply explore three or four intersections. Maybe five if you’re exceptionally curious and long-lived.

But the lattice of human knowledge isn’t three-by-three. It’s thousands of lines by thousands of lines. The number of potential intersections is enormous. Humans, collectively, have explored only a fraction of them.
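The arithmetic behind that claim is easy to sketch. The snippet below counts crossing points in a flat grid and then the number of ways several lines could meet at one node. The figure of 1,000 lines is an assumption standing in for “thousands,” and the function names are invented for this illustration.

```python
from math import comb

def grid_nodes(rows: int, cols: int) -> int:
    """Crossing points in a flat grid of rows-by-cols lines."""
    return rows * cols

def k_way_nodes(num_lines: int, k: int) -> int:
    """Number of distinct sets of k lines that could converge at one node."""
    return comb(num_lines, k)

ASSUMED_LINES = 1000   # assumption: a stand-in for "thousands of lines"
CAREER_NODES = 5       # the generous per-person estimate from the text

small = grid_nodes(3, 3)                           # 9, as in the simple grid
large = grid_nodes(ASSUMED_LINES, ASSUMED_LINES)   # 1,000,000
five_way = k_way_nodes(ASSUMED_LINES, 5)           # on the order of 10**12

print(small, large, five_way)
print(f"share of the flat grid one career covers: {CAREER_NODES / large:.6%}")
```

Even under this modest assumption, five-line nodes outnumber two-line crossings by several orders of magnitude, which is why the multi-line intersections are where most of the unexplored space sits.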

Here’s what AI changes: it could explore vastly more nodes than any human ever could.

No specialization bias. No limited lifespan. No need to choose one career path over another. It has absorbed patterns from nearly every line humans have written down, and it can systematically traverse the lattice, looking for combinations that might be useful.

This is why today’s AI can feel like magic. It finds intersections that no human thought to explore. Not because they weren’t interesting or worthy, but because no human happened to have the right combination of training and curiosity and time to stand at exactly that crossroads.

What Intersections Look Like

Some examples of what I mean by working at intersections.

Human-discovered intersections:

- Music + Mathematics → acoustics, the physics of sound, algorithmic composition

- Biology + Ethics → bioethics, the moral questions around life sciences

- Chemistry + Cooking → molecular gastronomy, the science of flavor

- Psychology + Economics → behavioral economics, understanding irrational choices

- Art + Engineering → industrial design, architecture, user interface design

- Philosophy + Mathematics → formal logic, proof theory, the foundations of reasoning

AI-enabled intersections:

- Protein structure + Gaming → Foldit, gamifying scientific discovery

- Linguistics + Neuroscience + Statistics → the mathematics underlying language models

- Historical textile techniques + Modern materials science → novel composite materials

- Ancient philosophy + Cognitive science + Product design → frameworks for digital wellbeing

- Epidemiology + Graph theory + Game theory → pandemic spread modeling

- Musical structure + Biological data → sonification of proteins and genomes

These AI-enabled intersections aren’t necessarily ones that AI invented. But AI makes them tractable. Makes it possible to explore them at scale, to find the useful patterns, to translate insights from one domain to another without requiring a human who happens to be expert in both.

The Question

Here’s what keeps me thinking: all of this happens within the existing lattice. Every intersection AI finds, every novel combination it suggests, every surprising connection it makes.

AI is phenomenal at connecting dots. The question is whether it can draw new lines.

What do I mean by a “new line”? I mean a genuinely novel conceptual dimension. Not a combination of existing ideas, but the introduction of a framework so fundamentally different that it creates an entirely new axis along which to think.

Consider what Newton did with calculus (Leibniz arrived at it independently around the same time). He didn’t find an intersection on the existing lattice. He drew a new line. A new way of thinking about change and motion and accumulation that didn’t exist before. Every future intersection involving calculus was made possible by that new axis.

Or what Simone de Beauvoir did when she reframed gender as socially constructed rather than biologically determined. Creating an entirely new dimension for understanding human identity and power. What Ibn Khaldun achieved in 14th-century North Africa by creating the framework for understanding social dynamics and historical cycles. Arguably founding sociology centuries before European scholars. What ancient Indian mathematicians did by conceiving zero not as absence but as a number. A conceptual revolution that transformed mathematics itself.

Consider Kimberlé Crenshaw’s intersectionality, showing how systems of oppression overlap and compound. W.E.B. Du Bois introducing double consciousness to describe the psychological experience of marginalization. The ancient agricultural revolution when humans first conceived of deliberately shaping the natural world. The invention of writing systems across multiple civilizations, each creating a new dimension for preserving and transmitting thought across time.

Darwin with natural selection. Marie Curie with radioactivity, part of the work that showed matter itself could transform in ways previously unimaginable. Einstein with spacetime. Turing with computability. bell hooks connecting race, class, and gender in ways that revealed new dimensions of social analysis. These weren’t clever combinations of existing ideas. They were new dimensions of thought that expanded the very space in which ideas could exist.

Creating new lines is what humans do. It’s what makes progress possible, and is also crucial to our species’ survival in an ever-changing world. Without new lines, we’d eventually exhaust the intersections of our current lattice. We’d run out of novel combinations.

The Missing Capability

Think about what’s happened so far in the evolution of AI:

Stage 1: Machines couldn’t understand our concepts at all. We struggled to teach them what “music” or “face” or “language” even meant.

Stage 2: Machine learning cracked the representation problem for individual domains. Machines could recognize and recreate patterns within the lines. Within single conceptual dimensions.

Stage 3: Large language models learned to work across existing lines, finding patterns at intersections. They could traverse the entire grid, combining concepts in ways humans hadn’t explored.

Each stage felt like a breakthrough. Each stage was a breakthrough. But notice what’s missing: none of these stages involves creating new lines.

AI has gone from not understanding our lattice, to understanding individual lines, to traversing the whole lattice efficiently. What it hasn’t done, what would mark the next fundamental leap, is expand the lattice itself.

This, I believe, is what separates current AI from true artificial general intelligence. Not better intersection-finding. Not faster traversal. But the ability to create genuinely new conceptual dimensions.

Why Lines Are Hard

Think about what it takes to draw a new line on the lattice.

It requires stepping outside the lattice entirely. Seeing the existing framework as a framework. As one possible way of organizing thought among many. It requires asking: what if there were another axis altogether?

This kind of thinking often emerges from profound discomfort with existing paradigms. Darwin’s theory emerged from struggling to reconcile geological evidence with creationist frameworks. Quantum mechanics was born from the failure of classical physics to explain certain phenomena. Relativity came from taking seriously a contradiction that other physicists had dismissed.

New lines often start as problems. Cracks in the lattice. Before becoming new dimensions.

Can AI experience that discomfort? Can it recognize when an intersection doesn’t quite work, when something is fundamentally wrong with the lattice itself, or when new data emerges that exposes a misalignment among previous concepts?

Current systems are optimized to find coherence in their training data. They’re trained to work within the patterns humans have already created. That’s different from being able to recognize when those patterns break down.

There’s also the question of embodiment. Many breakthrough conceptual frameworks emerged from physical experience. From direct engagement with the world in ways that generated genuinely novel phenomena to explain. AI processes representations of the world. That’s a fundamentally different relationship.

Possibility and Practice

I want to be careful here. I’m not saying AI can’t create new lines. I’m saying it hasn’t yet, and we don’t know how it would.

Theoretically, there’s no law of physics that says intelligence must be biological. If creating new conceptual dimensions is something minds do, and if minds can exist in silicon as well as carbon, then in principle AI could create new lines.

But practically, we don’t know how to build that.

Current architectures are optimized for pattern recognition across existing data. They’re interpolation engines, however sophisticated. Creating new lines might require something different. Different training, different architecture, different relationship to the world.

Or it might emerge spontaneously as systems get larger and more capable. We don’t know. That uncertainty is part of what makes this moment interesting.

Implications

If this framework is right, if the distinction between intersection-finding and line-drawing captures something real, then it clarifies what current AI is and isn’t.

What AI does exceptionally well:

- Traverse the entire lattice of human knowledge

- Find unexplored intersections

- Apply insights from one domain to problems in another

- Work at nodes that no human had time or training to explore

- Accelerate the exploration of our existing conceptual space

What AI hasn’t demonstrated:

- Creating genuinely new conceptual dimensions

- Paradigm-breaking insight that expands the lattice itself

- First-principles thinking that introduces new axes of thought

This isn’t a criticism. Intersection-finding at civilizational scale is genuinely transformative. We have centuries’ worth of unexplored nodes on our current lattice. AI making those accessible is not a small thing.

But it does suggest where the real threshold lies. The difference between a very good tool and something we’d call genuine artificial intelligence might not be speed or capability or knowledge. It might be the ability to draw a line that didn’t exist before.

The Open Question

So: can AI create new lines?

I don’t know. Neither does anyone else, whatever they claim.

What I believe is that this is the right question to ask. Not “can AI write code?” or “can AI create art?” or even “can AI be creative?” Those questions are already answered: yes, within the existing grid, often impressively so.

The question that matters is whether AI can expand the lattice. Whether it can introduce conceptual dimensions that humans hadn’t imagined. Whether it can do what Newton and Darwin and Einstein did: not just work within frameworks, but create new ones.

If it can, we’re looking at something genuinely unprecedented. A partner in the deepest work of human civilization. Expanding the space of what’s thinkable.

If it can’t, if line-drawing is somehow uniquely biological, or uniquely tied to embodied experience, or requires something about consciousness we don’t understand, then AI remains an extraordinarily powerful tool for exploring a fixed space.

Either way, understanding the distinction matters. The intersections are ours to explore together. But the lines, the new dimensions of thought, those determine whether the space keeps growing.

And that, ultimately, is what I’m watching for.

The lattice metaphor has been useful. It builds intuition. It helps us see what AI does exceptionally well, traversing intersections, and what it hasn’t yet demonstrated: creating new conceptual dimensions.

But like all models, it’s a simplification. A way of organizing thought that makes certain things visible while obscuring others. And the most interesting questions might be precisely the ones the framework can’t answer.

So I’ll do what any honest examination requires: question the very model I’ve just built.

What the Lattice Can’t Capture

Human concepts aren’t actually discrete lines. They aren’t even planes. They’re clouds. Infinite-dimensional spaces that blur and overlap in ways we can’t visualize. There are no sharp boundaries where “mathematics” ends and “music” begins, where “biology” stops and “ethics” starts.

Human knowledge doesn’t live on a neat lattice. It exists in the overlaps of infinite-dimensional spaces. Continuous regions of understanding that blend and merge in ways that resist any simple mapping. The lattice metaphor helped us think, but it also constrained our thinking.

And here’s the deeper possibility: humans might never actually create new lines.

What I’ve been calling “new lines” might not be new dimensions at all. Calculus, natural selection, gender as social construct, intersectionality. They might be expansions of our perception across dimensions that already existed. The space of possible knowledge might be already infinite-dimensional, and human progress isn’t about creating new axes but about discovering more of what’s already there.

In this view, Newton didn’t create calculus. He discovered a way of seeing change and motion that had always been possible to think about. Humans just hadn’t learned to perceive it yet. Simone de Beauvoir didn’t create gender-as-construct. She revealed a dimension of human experience that had always existed but that we’d been unable to articulate. Every “breakthrough” is just pushing our awareness further into an already-infinite conceptual space.

If that’s true, then what is AI doing when it traverses the lattice? It’s doing exactly what humans do: expanding awareness across an infinite-dimensional space of knowledge. The only difference is scale and speed.

And if there are no “new lines” to create, only an ever-expanding understanding of infinite dimensions, then AI becoming truly intelligent isn’t about achieving some qualitative leap. It’s about getting vaster. Absorbing more data. Having access to more capabilities. Making more connections. Expanding across more dimensions of this infinite knowledge space.

At some point, when AI’s understanding spans enough of these dimensions, when it can perceive patterns across enough of this infinite-dimensional space, perhaps we’ll simply start calling it intelligent. Not because it crossed some threshold from “intersection-finder” to “line-creator,” but because the distinction was artificial all along.

True intelligence, human or artificial, might not be about creating new dimensions. It might be about the continuous, endless work of expanding awareness through dimensions that were always there, waiting to be perceived.

And that means the path to artificial general intelligence isn’t necessarily some mysterious breakthrough we’re waiting for. It might just be more. More data, more parameters, more connections, more dimensions perceived.

Which is either the most hopeful or the most unsettling possibility of all: that the intelligence we’ve been trying to understand might not require crossing some threshold we can barely imagine. It might just require getting vast enough to perceive what was always there.

A Note on Method

This essay has done something deliberate. I built a framework of lines, lattices, and intersections, and used it to develop intuition about where AI is and what it might become. The model is useful. It clarifies. It helps us ask better questions.

Then I broke it down. Not because I was wrong to build it, but because no model is complete. The lattice metaphor captures something real about AI’s capabilities and limitations. But it also imposes structure on something that might be fundamentally more fluid, more continuous, more infinite than any lattice can represent.

This is how understanding works. We build frameworks to think with, then we question those frameworks to think beyond them. The goal isn’t to arrive at a final model but to keep refining our questions.

Can AI create new lines? Can it expand the lattice? Or is the lattice itself an illusion? A useful fiction that helped us think but that ultimately obscures more than it reveals?

I don’t know. But the frameworks we build to understand intelligence, and our willingness to dismantle them when they constrain us, might matter more than any answer we arrive at.